I ran this test on the exception column from one of my production error logs and I got this result:Īs you can see, that's a good deal of compression. However it may not present you with true data size and performance gains, especially for Innodb tables. SELECT AVG(LENGTH(COMPRESS(body))) FROM data gives good data point. In the Percona blog post, linked above, the author mentions that you can run a test on the table to see what kind of storage gains you might see:īefore going ahead with compression I usually run some checks to see how much compression will benefit you. But, I do have some data tables that just warehouse large chunks of data (ex, error log) and this seems like it could be an possible win. It's probably not appropriate for VARCHAR fields, which does limit the number of places that I could even consider using it. In any case, this demo works as expected (running ColdFusion 10).Ĭompression seems like a really interesting aspect of MySQL. This is something that I have heard in passing and my not be true or may no longer be relevant in recent releases of ColdFusion. I should mention that I have heard that ColdFusion sometimes has trouble serializing exception objects. When I catch the error, I am just passing it off to the Logger.cfc instance that subsequently serializes it as JSON (JavaScript Object Notation). #timeFormat( recentErrors.createdAt, "HH:mm:ss" )# #dateFormat( recentErrors.createdAt, "Mmm d" )# at database, it will still be serialized we will have to deserialize it ourselvesĮxperimenting With MySQL Compress() And Uncompress() Methods In ColdFusion Get recent errors to display in our error log. data that may be relevent, as part of the metaData object.

Log the error - here we can pass in the error object as well as any arbitrary For the sake of the demo, we're going to create an error on each load of the page. The page shows the most recently logged errors but, it also creates a new error on every page-load. To test out my new Logger.cfc ColdFusion component, I created a little error log viewer that self-populates. No need to trade-in SQL-injection protection for compression! Notice that we can still use the ColdFusion CFQueryParam tag in conjunction with the Compress() function. So, here's the data-table that I came up with:ĬONVERT( UNCOMPRESS( e.errorData ) USING 'utf8' ) AS errorData,ĬONVERT( UNCOMPRESS( e.metaData ) USING 'utf8' ) AS metaData, In the MySQL documentation, it recommends that the compressed data be stored in a Blob or VarBinary column type.

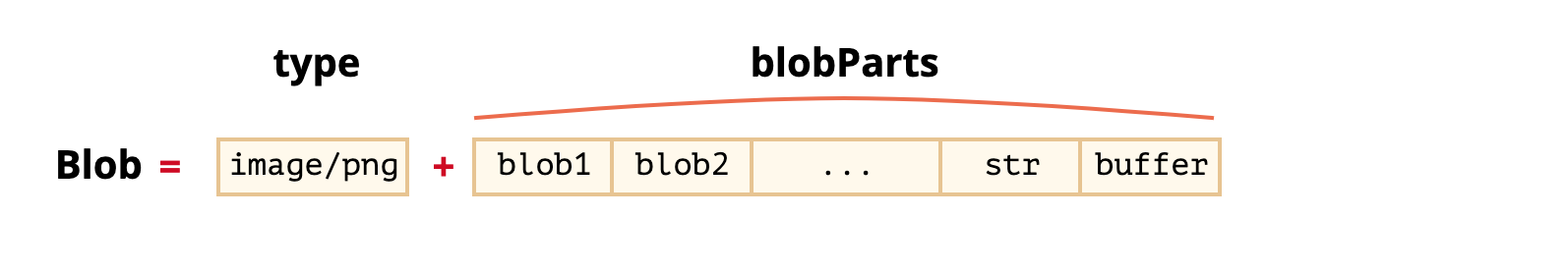

To start exploring, I want to create my data-access object - the ColdFusion component which moves data into and out of the database. After all, I'm not querying this data very often and, when I do query it, I never have to search on those "data" fields - I just have to get them out of the database and display them on the page. So, going back to the idea of an error log that has to store lots of stack traces, exception objects, and meta data, it feels like the kind of context in which data-compression would make sense. That said, I'm still interested in trying it out. But, I can point you to this Percona blog post that talks about some of the considerations in terms of performance and storage. Since this is just an experiment, and the first time that I have ever used these functions, I won't pretend to have any advice on when it's best to use them. This seems like it may be useful for tables that store a lot of long-text data, such as an error log. I had never heard of these before but, they do exactly what you think they might - compress and uncompress text values. So the question is, can I use the 'invalid_cause' and 'unarchive_cmd' in the props for Microsoft Cloud Services app? If this doesn't work I need to come up with another solution, and I'm thinking I can just copy the files locally and then run it through a standard file monitor process and attempt to run the unarchive command there.The other day, I was talking to Alexander Rubin from Percona about some MySQL optimization techniques when he mentioned that MySQL has compress() and uncompress() functions. I would not consider this option easily, it means you're trading off queriability of the data. So your best option is to compress/decompress in the client (ZIP). As a side note, if I decompress the file in Azure Blob and then ingest it, it works perfectly. 
SQL Server 2008 R2 has three compression options: page compression (implies row compression) All three options only apply to data (rows), so none could help with large documents (BLOBs). I know the nf is not correct or does not need that many stanzas, but I tried adding all of these in an attempt to get it to work as I'm not even sure it's using the nf file.
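For reference, here is a minimal sketch of what an error log table and its compressed round trip could look like in MySQL. The table name error_log, the id column, the MEDIUMBLOB sizing, and the sample JSON values are assumptions made for illustration; only the errorData, metaData, and createdAt column names come from the post, and this is not the original Logger.cfc code.

```sql
-- Hypothetical table; only errorData, metaData, and createdAt appear in the post.
CREATE TABLE error_log (
    id INT UNSIGNED NOT NULL AUTO_INCREMENT,
    errorData MEDIUMBLOB NOT NULL, -- compressed JSON of the exception
    metaData MEDIUMBLOB NOT NULL,  -- compressed JSON of any extra data
    createdAt DATETIME NOT NULL,
    PRIMARY KEY ( id )
);

-- Write: compress on the way in. In ColdFusion, each string literal
-- below would be a CFQueryParam binding instead.
INSERT INTO error_log ( errorData, metaData, createdAt )
VALUES (
    COMPRESS( '{"message":"Something went wrong"}' ),
    COMPRESS( '{"userID":4}' ),
    NOW()
);

-- Read: uncompress, then convert the binary result back to a
-- character string. The value is still serialized JSON at this point.
SELECT
    e.id,
    CONVERT( UNCOMPRESS( e.errorData ) USING utf8mb4 ) AS errorData,
    CONVERT( UNCOMPRESS( e.metaData ) USING utf8mb4 ) AS metaData,
    e.createdAt
FROM
    error_log e
ORDER BY
    e.id DESC
LIMIT 10;
```

Since UNCOMPRESS() returns a binary string, the CONVERT( ... USING ... ) wrapper is what turns the result back into text that the application can deserialize.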

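If you want to see the raw and compressed sizes side by side, the Percona-style check can be extended a little. This is only a sketch: the table name data and the column body are taken from the quoted example, the output aliases are made up, and it assumes body currently holds uncompressed text.

```sql
-- Compare average raw size against average compressed size, in bytes.
SELECT
    COUNT( * ) AS sampleSize,
    AVG( LENGTH( body ) ) AS avgRawBytes,
    AVG( LENGTH( COMPRESS( body ) ) ) AS avgCompressedBytes,
    AVG( LENGTH( COMPRESS( body ) ) ) / AVG( LENGTH( body ) ) AS compressedRatio
FROM
    data;
```

As the quoted advice points out, these are value lengths only, so they may not reflect the true on-disk size or performance gains, especially for InnoDB tables.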